Ternary-Quantized AI, From Silicon to Models

Ternary AI infrastructure for the real world.

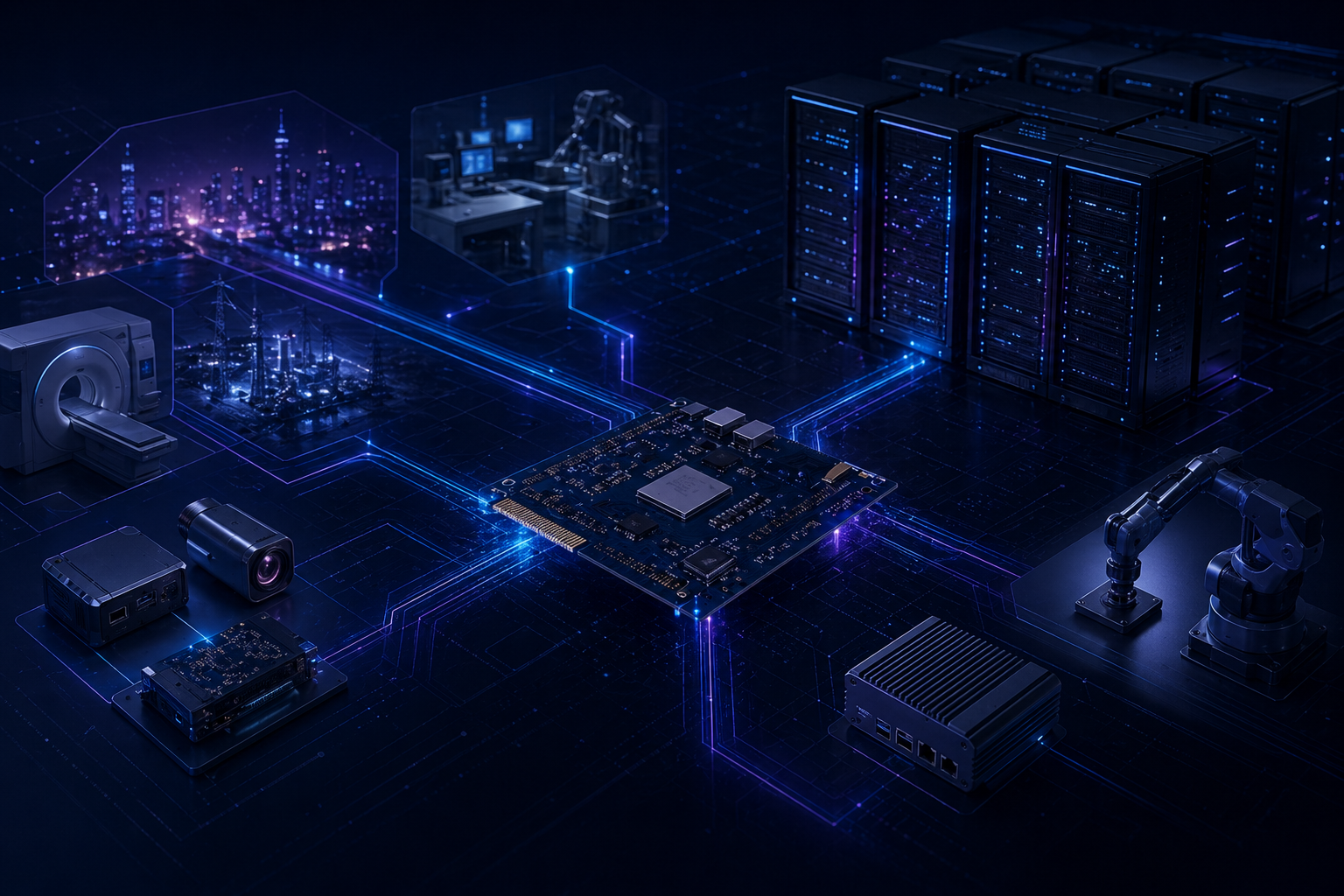

TaoTern builds vertically integrated AI systems for a future where inference must run closer to the data, under tighter power budgets, and with full operational control.

We combine custom acceleration hardware, open software, and ternary-optimized models to make high-performance LLM deployment practical beyond the data center.

Why TaoTern

Cloud AI was not designed for the environments that need intelligence most.

Most modern AI infrastructure assumes abundant power, centralized compute, and constant cloud access. Those assumptions break in enterprise, industrial, embedded, and sovereign deployments.

TaoTern is building the alternative: efficient AI systems that deliver serious inference capability where power efficiency, latency, and data control are non-negotiable.

Too much power

General-purpose compute wastes energy on inference workloads that purpose-built hardware would run more efficiently.

Too much dependence

Cloud-first deployment leaves critical systems exposed to latency, cost, and connectivity constraints.

Too little control

Sensitive inference often needs to run on-device or on-prem, not inside someone else's infrastructure.

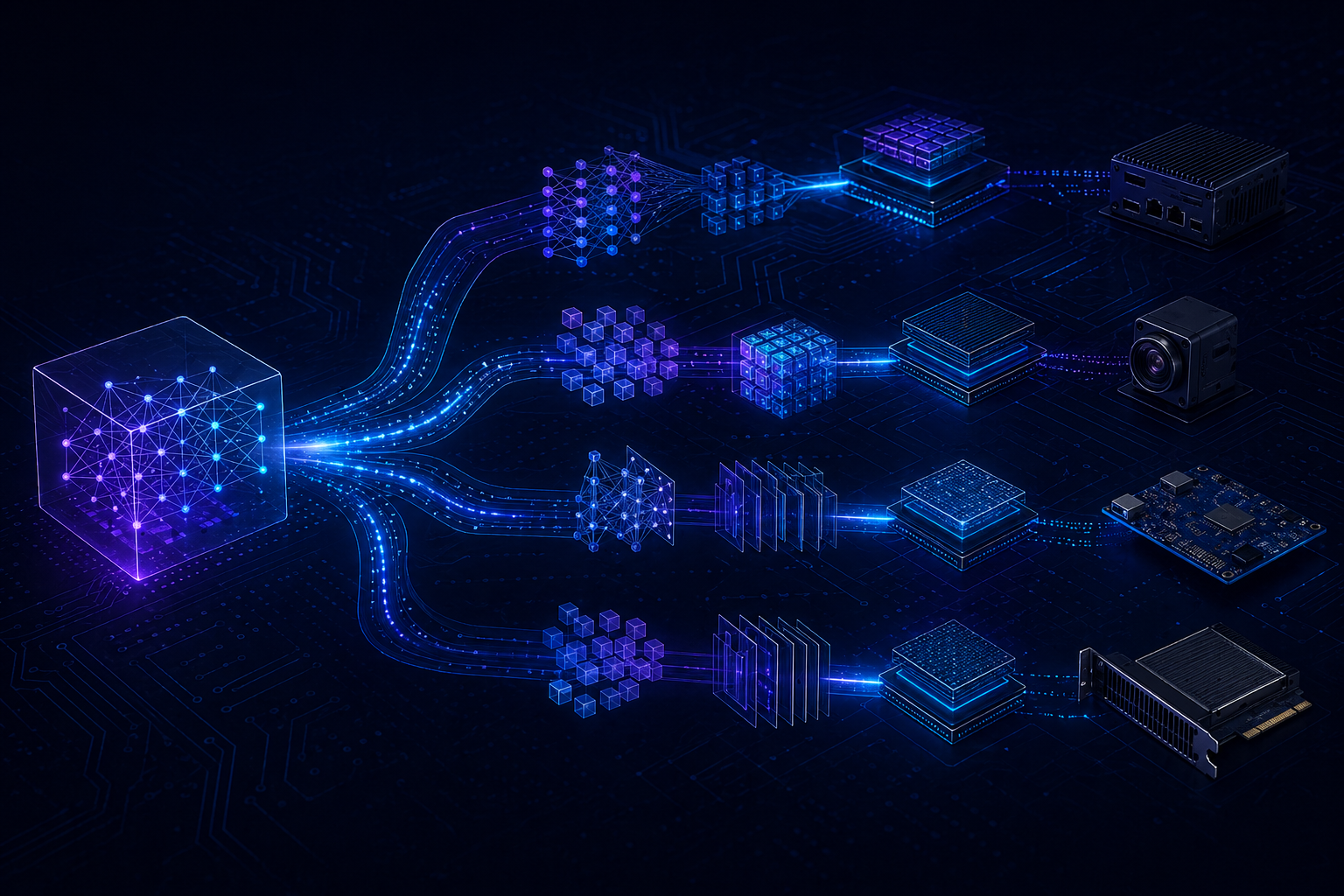

Platform

One stack, designed together for efficient sovereign inference.

TaoTern controls the critical layers of deployment performance: custom hardware, open execution software, and model architectures built specifically for ternary quantization.

That vertical integration lets us co-design around throughput-per-watt, predictable latency, and deployability in places where GPUs and cloud assumptions do not hold.

Silicon

TaoTern TPU

A Ternary Processing Unit engineered for quantized LLM inference, delivering strong real-time performance under a 3W power envelope.

Software

Open runtime ecosystem

Firmware, drivers, and a PyTorch-compatible execution layer built to make optimized deployment practical rather than experimental.

Models

Ternary-optimized LLMs

Custom architectures and transformation workflows designed for compact, efficient inference in edge, embedded, and enterprise environments.

Performance

Efficiency that changes where AI can actually run.

10x

TaoTern's optimization approach can transform existing LLMs into state space model (SSM) architectures for up to 10x faster inference. Separately, our hardware path targets an order-of-magnitude improvement in energy efficiency over CPU-based inference.

Proof of Capability

A working baseline model that shows the architecture direction.

TaoTern's baseline model is a 30M-parameter, SSM-based, ternary-quantized LLM built for compact deployment and predictable inference behavior.

It is not the endpoint of the company. It is proof that the stack works, and a foundation for customer-specific fine-tuning, distillation, and transformation workflows.

Linear-time inference

The SSM architecture scales linearly with sequence length, which supports efficient execution and more predictable latency.
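
To illustrate why an SSM gives linear-time inference, here is a minimal sketch of a generic diagonal linear state space recurrence. It is not TaoTern's model: the function names (`ssm_step`, `ssm_generate`), the scalar input, and the fixed parameters `a`, `b`, `c` are all assumptions chosen for illustration. The point is that each new token updates a fixed-size state once, so the cost per token does not grow with sequence length.

```python
import numpy as np

def ssm_step(h, x_t, a, b, c):
    """One step of a diagonal linear SSM (illustrative, hypothetical names).

    h_t = a * h_{t-1} + b * x_t
    y_t = c . h_t
    """
    h = a * h + b * x_t
    return h, float(c @ h)

def ssm_generate(xs, a, b, c):
    """Process a sequence with one fixed-size state update per token."""
    h = np.zeros_like(a)      # state size is independent of sequence length
    ys = []
    for x_t in xs:            # one pass over the sequence: O(length) total
        h, y_t = ssm_step(h, x_t, a, b, c)
        ys.append(y_t)
    return np.array(ys), h

# Toy parameters (assumed values, not taken from any real model).
a = np.array([0.9, 0.5, 0.1])   # per-channel state decay
b = np.array([1.0, 1.0, 1.0])   # input projection
c = np.array([0.2, 0.3, 0.5])   # output projection
xs = np.ones(6)

ys, h = ssm_generate(xs, a, b, c)
```

Because the state `h` has a fixed size, memory use and per-token work stay constant however long the input grows, which is what makes latency easier to predict.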

Compact footprint

Ternary quantization reduces compute and memory pressure for deployment beyond the cloud.
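
As a rough illustration of where the savings come from, the sketch below quantizes a weight matrix to the three values -1, 0, and +1 with a single scale factor. It uses a simple absolute-mean scaling rule as an assumption; it is not necessarily the scheme TaoTern uses, and the helper names (`ternary_quantize`, `ternary_matvec`, `packed_size_bytes`) are hypothetical.

```python
import numpy as np

def ternary_quantize(w, eps=1e-8):
    """Map weights to {-1, 0, +1} plus one float scale (absmean rule, assumed)."""
    scale = float(np.mean(np.abs(w))) + eps
    q = np.clip(np.rint(w / scale), -1, 1).astype(np.int8)
    return q, scale

def ternary_matvec(q, scale, x):
    """Matrix-vector product with no weight multiplications:
    add inputs where the weight is +1, subtract where it is -1."""
    pos = np.where(q == 1, x, 0.0).sum(axis=1)
    neg = np.where(q == -1, x, 0.0).sum(axis=1)
    return scale * (pos - neg)

def packed_size_bytes(num_weights, bits_per_weight=2):
    """Storage for packed weights; 2 bits per ternary weight is an assumed packing."""
    return (num_weights * bits_per_weight + 7) // 8

rng = np.random.default_rng(0)
w = rng.normal(size=(4, 8)).astype(np.float32)
x = rng.normal(size=8).astype(np.float32)

q, scale = ternary_quantize(w)
y = ternary_matvec(q, scale, x)
```

Under the assumed 2-bit packing, 30 million weights occupy 7.5 MB, compared with 60 MB at 16 bits per weight. Real deployments typically keep some tensors at higher precision, so actual footprints will differ.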

Adaptable workflow

Serves as a base for custom training, distillation, and model transformation.

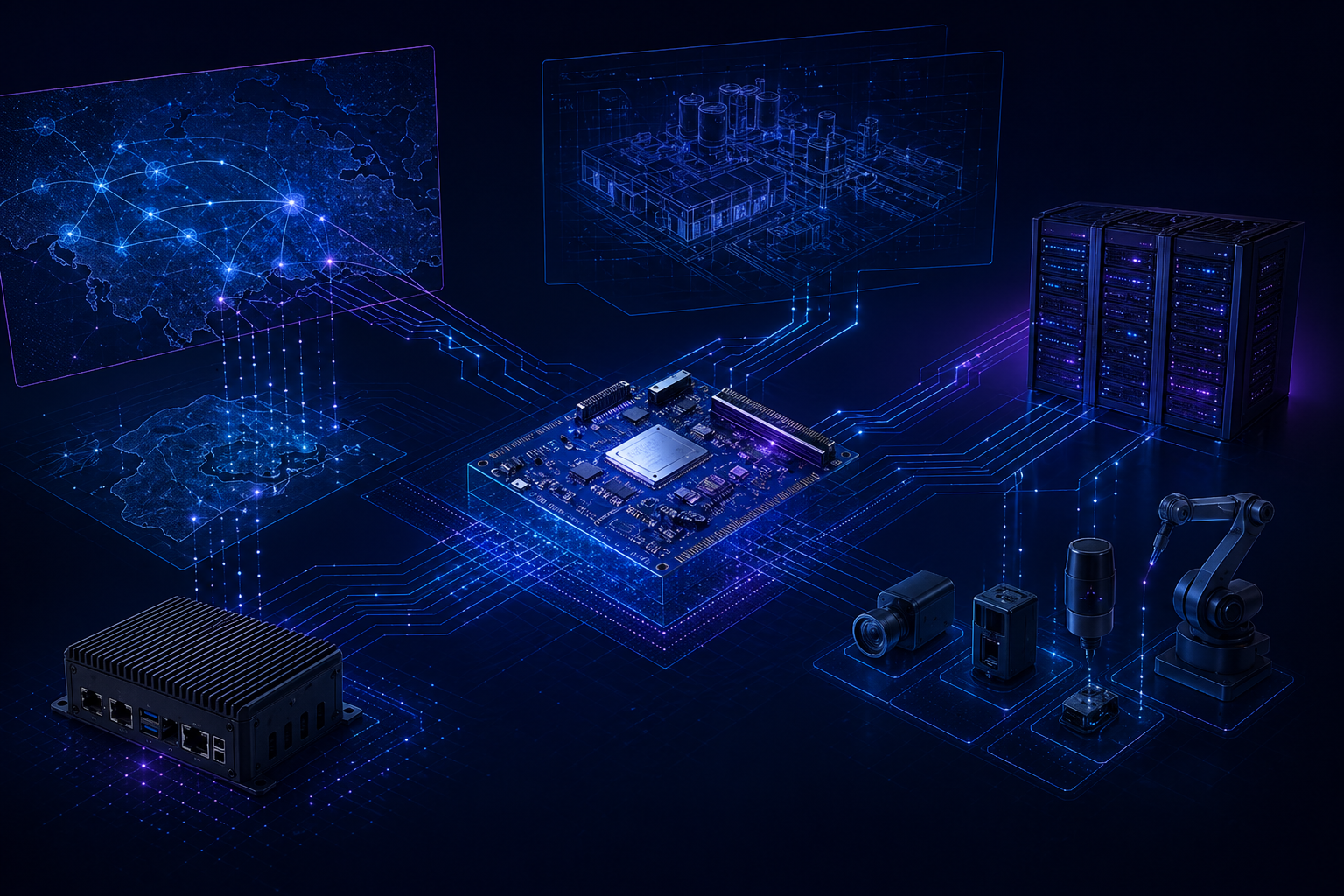

Use Cases

Built for environments where data, power, and latency are strategic constraints.

Edge and embedded systems

Run practical language inference in devices and systems that cannot tolerate GPU-scale power draw.

On-prem enterprise AI

Keep inference close to sensitive data with controlled infrastructure and long-term cost discipline.

Offline and sovereign deployment

Support mission-critical workloads where cloud connectivity is limited, undesirable, or impossible.

How We Work

From deployment constraints to production inference.

Discovery

We define model goals, deployment boundaries, power budgets, and latency targets with your team.

Model strategy

We train, fine-tune, distill, or transform existing models into more efficient architectures.

Deployment

We deploy remotely or on-site, with support for both inference hosting and TPU server rollouts.

Contact

Build efficient AI where your data lives.

TaoTern is building the infrastructure layer for real-world inference: efficient, sovereign, and deployable outside the hyperscaler template.